Aiming at AI market giants like Tesla and Nvidia, AI Vector Vision recently unveiled its “revolutionary” vector ecosystem designed to transform unstructured data into precise, scalable intelligence.

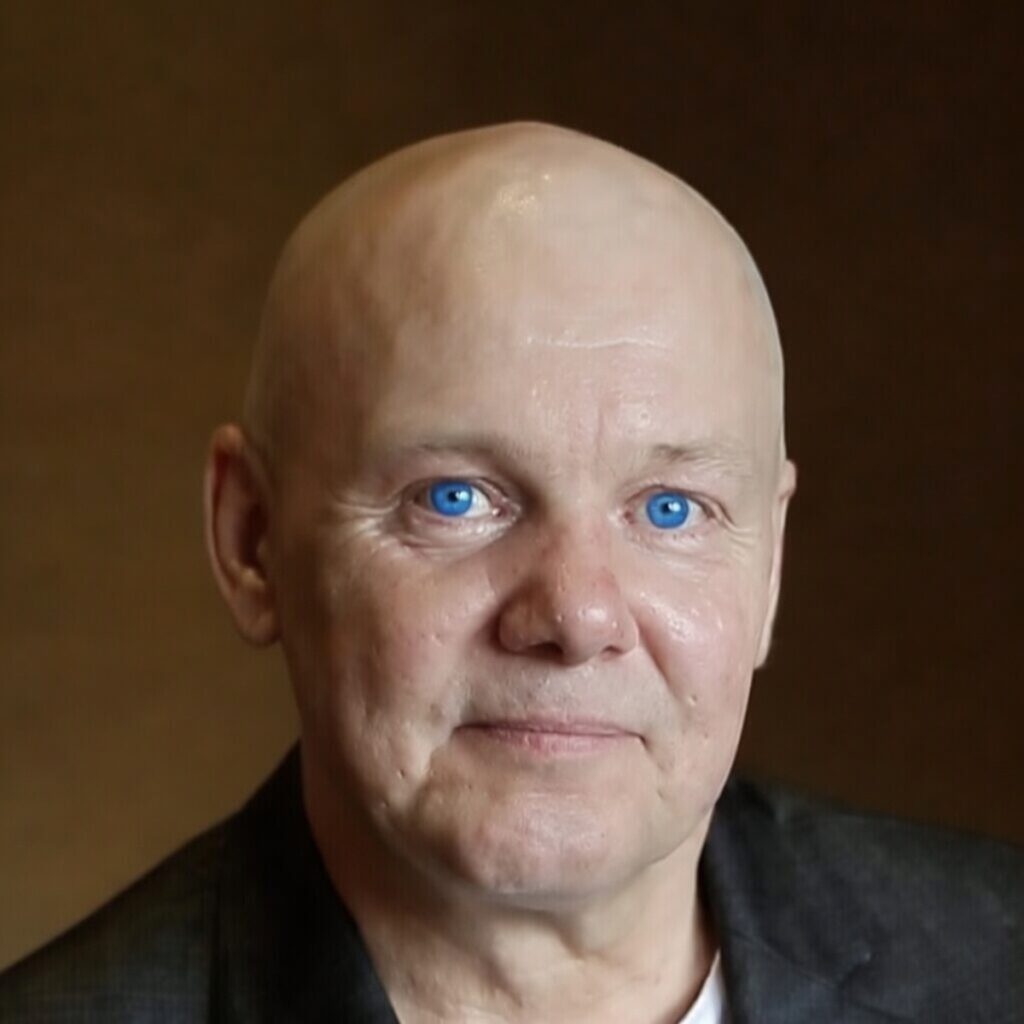

At the heart of this tech is the AI Vector Engine (AIVE), the world’s first 100% lossless raster-to-vector graphics converter. AIVE outperforms industry leaders like Adobe Illustrator and ChatGPT in fidelity, artifact handling, and efficiency, said Cisco Schipperheijn, CEO of New York-based AI Vector Vision, in an interview with GamesBeat.

He said it empowers AI agents with “watch and learn” capabilities, enabling real-time screen monitoring and adaptive learning from visual data, with transformative applications in robotics for enhanced vision and autonomous decision-making. The tech will be captured in the Lucid Visual AI model.

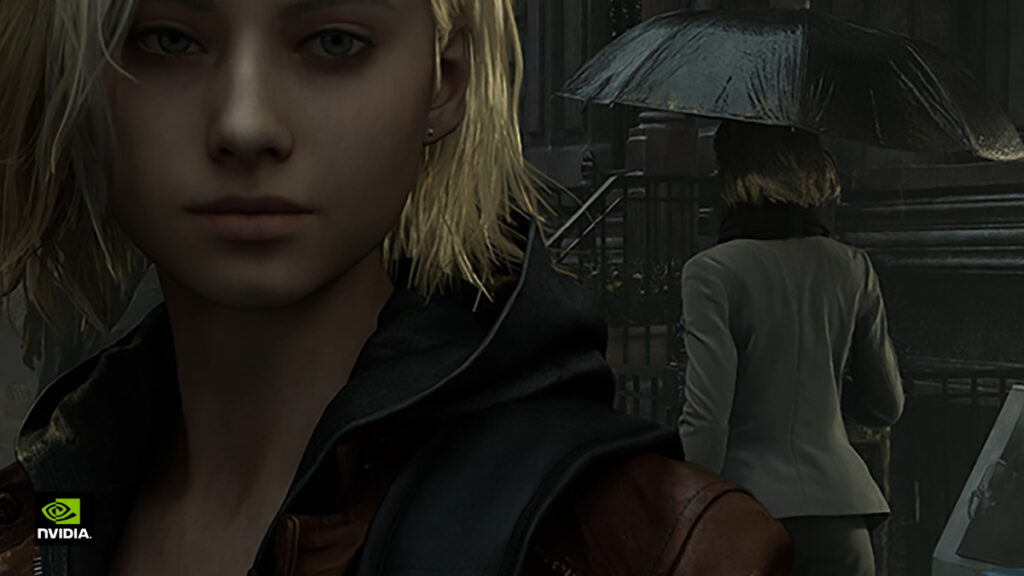

And the company has noted that it can fix the unrealism that fans and game developers complained about when they saw Nvidia’s latest DLSS 5 game graphics tech, which was intended to use generative AI to improve the quality of game characters and objects with better shadows and lighting. Instead, observers reacted negatively to the AI’s fidelity. I spoke with Schipperheijn about the technology, which has been in the works for a very long time.

(Jensen Huang, CEO of Nvidia, said in a press Q&A that gamers were wrong about this one, but the hatred gamers have for the tech and AI itself is pretty obvious; Nvidia did not respond to our request for further comment.)

“Imagine empowering robotics leaders like Tesla’s Optimus or Nvidia’s simulation platforms with seamless integrations that convert raster camera feeds into structured vectors in real-time,” said Schipperheijn. “This enables AI agents to monitor, learn, and adapt with unprecedented precision, reducing latency and compute demands while unlocking new levels of autonomy in everything from manufacturing to exploration.”

This launch comes at a critical time, as AI demands for efficient, verifiable data soar. Early results have reported significant reductions in file sizes and faster processing in visual tasks, aligning with global sustainability goals amid rising compute costs, he said.

AI Vector Vision is committed to open innovation, with plans to release developer tools and APIs for the ecosystem in Q2 2026, said Schipperheijn. He just started telling the world about AIVE, and he’s gotten some curiosity from a graphics expert.

“It’s a novel approach using LLM techniques to convert a pixelated raster image into smooth, geometrically correct line segments and mathematically correct curves,” said graphics expert Jon Peddie, founder of Jon Peddie Research, in a message to GamesBeat. “Just as DLSS uses AI to interpolate intermediate frames. But don’t limit your thinking to just drawing lines with a plotter or on a screen; it can create boundaries that then can be tested for intersections and collisions with other geometric shapes. It looks to be a very promising new tool, and will find a home in CAD, simulation, and visualization.”

And Ted Pollak, an analyst at Jon Peddie Research who was briefed on the tech, also said in a message to GamesBeat, “In speaking with Francisco (Cisco) Schipperheijn, my understanding is that the ability to convert raster graphics to lossless vector mathematics will save triple-A game developers a third of their graphics development burden in many scenarios. If true in practice, this would represent a revolutionary advancement in game development efficiency.”

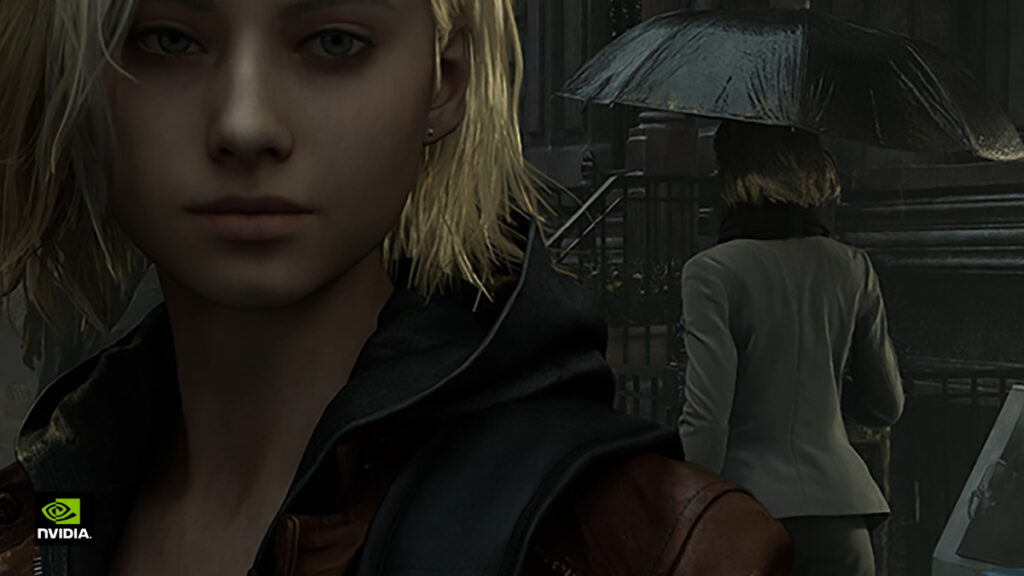

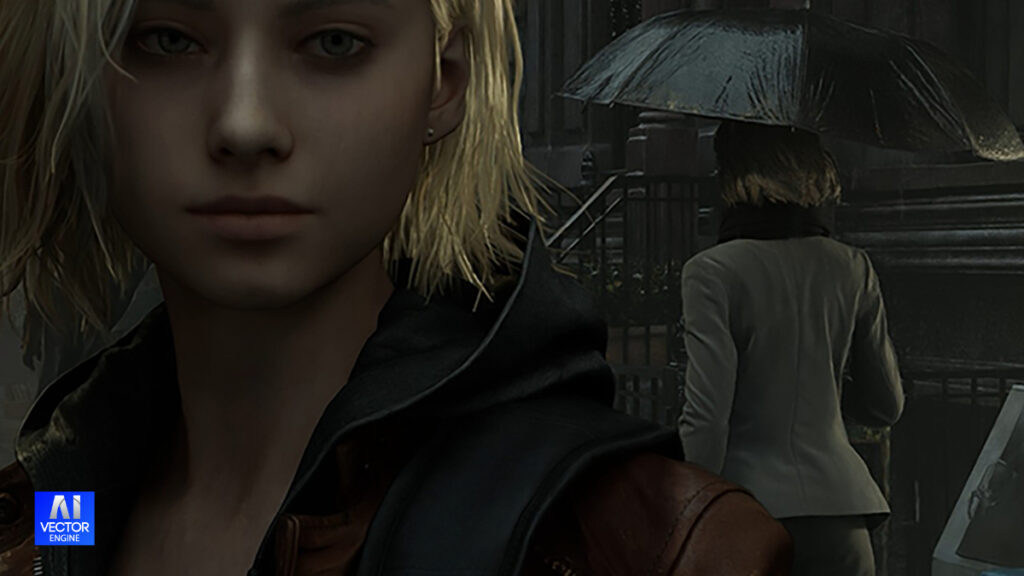

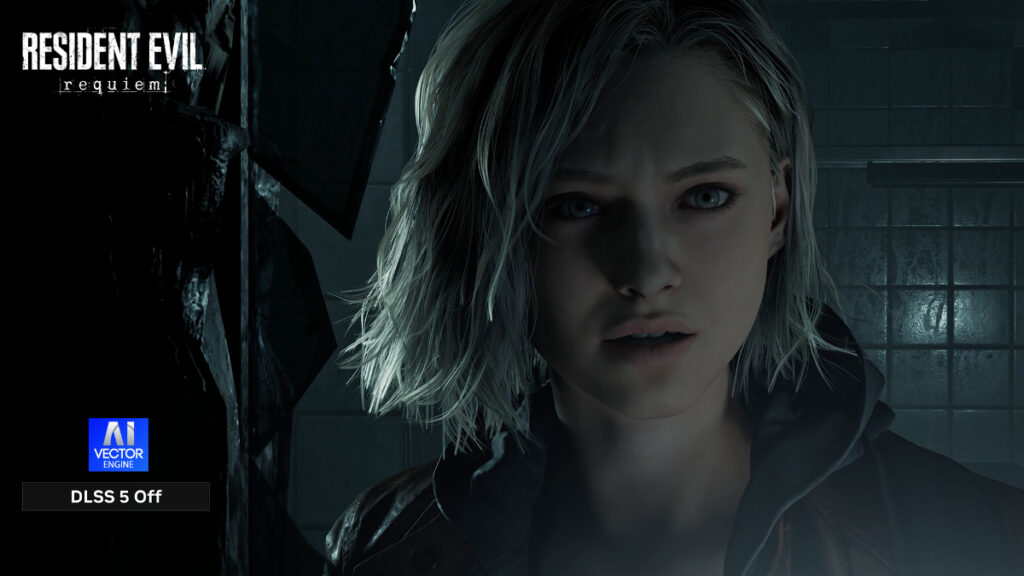

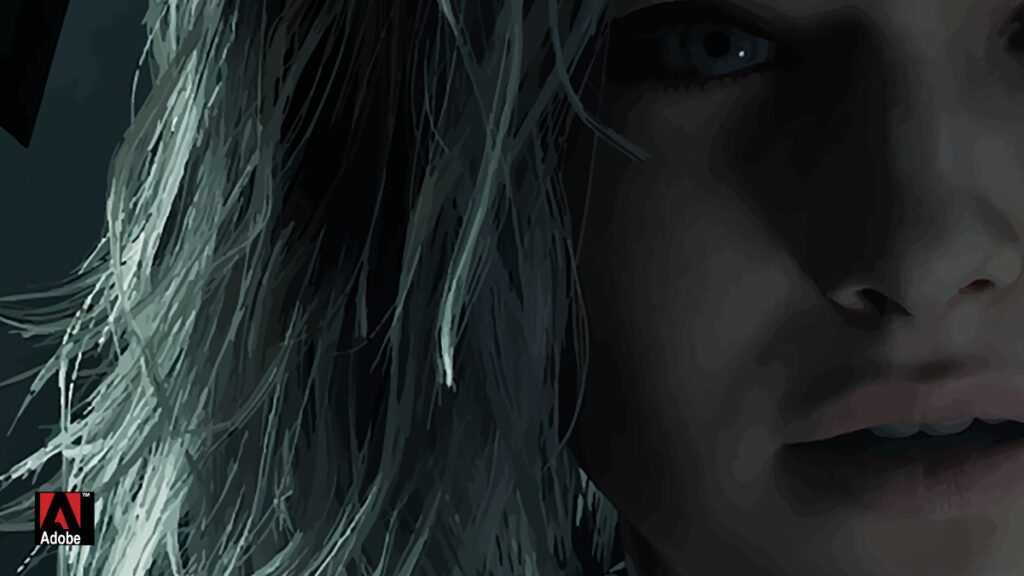

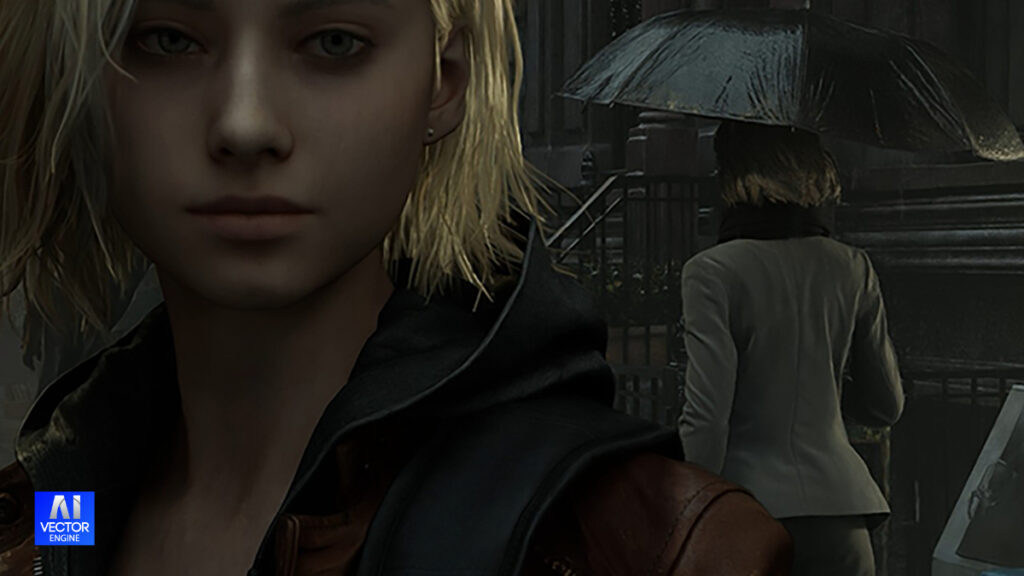

Schipperheijn said it can also help with video game image quality. He said the images below show what’s possible. Basically, these images show better quality for AIVE based on the imagery that Nvidia showed when it announced that DLSS 5 could provide better graphics quality.

Resident Evil – Requiem: AIVE vs Adobe Illustrator Head-to-Head Comparison DLSS 5 Off vs DLSS 5 On – 100% and 300% Zoom

AIVE Vector Engine – 100% Lossless Fidelity

Schipperheijn said that independent Python evaluation scripts confirmed 100% exact match on both RGB checksum and pixel-level mapping for every AIVE vector conversion in this Resident Evil Requiem test.
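GamesBeat has not seen the evaluation scripts themselves. As a rough sketch of what a check like the one described could look like, the Python below compares two images held in memory as rows of (R, G, B) tuples, first with a SHA-256 checksum over the dimensions and raw RGB bytes, then pixel by pixel. The function names and the in-memory image format are assumptions made for illustration, not AI Vector Vision's code.

```python
import hashlib


def dimensions(image):
    """Return (width, height) of an image stored as a list of pixel rows."""
    height = len(image)
    width = len(image[0]) if height else 0
    return width, height


def rgb_checksum(image):
    """Return a SHA-256 hex digest over the image size and its raw RGB bytes.

    `image` is a list of rows; each row is a list of (r, g, b) tuples (0-255).
    """
    width, height = dimensions(image)
    digest = hashlib.sha256(f"{width}x{height}:".encode("ascii"))
    for row in image:
        for r, g, b in row:
            digest.update(bytes((r, g, b)))
    return digest.hexdigest()


def mismatched_pixels(source, candidate):
    """Return the (x, y) positions where two same-sized images differ."""
    if dimensions(source) != dimensions(candidate):
        raise ValueError("images have different dimensions")
    return [
        (x, y)
        for y, (row_a, row_b) in enumerate(zip(source, candidate))
        for x, (pixel_a, pixel_b) in enumerate(zip(row_a, row_b))
        if pixel_a != pixel_b
    ]


def is_exact_match(source, candidate):
    """True only if both the checksum and the per-pixel comparison agree."""
    if dimensions(source) != dimensions(candidate):
        return False
    same_checksum = rgb_checksum(source) == rgb_checksum(candidate)
    return same_checksum and not mismatched_pixels(source, candidate)
```

In a setup like this, the candidate image would be the PNG re-exported from the SVG, decoded into the same row-of-tuples form as the source. A single changed color channel in one pixel is enough to fail both tests.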

He said this perfect fidelity is exactly what AIVE was designed for: creating mathematically precise, structured vector visual data for databases and next-generation pipelines. Because AIVE achieves true lossless conversion, the output doesn’t just look “good” — it looks photorealistic when the source is photorealistic, and it stays true to the artist’s intended style when the source is stylized or game-like.

1. Source Image – 100% Zoom (DLSS 5 Off) Original Resident Evil – Requiem game frame with DLSS 5 disabled. This is the ground-truth reference showing natural skin tones, detailed facial features, and the moody cinematic atmosphere of the scene.

In these tests he said we can see clear proof:

- The raw source image without Nvidia DLSS-5 (older game rendering style) is replicated with perfect accuracy by AIVE.

- The DLSS-5 processed version is also copied faithfully as a clean vector.

- No matter whether the input is realistic or artistic, AIVE preserves the creator’s vision exactly.

Key Advantages for Game Development

You can use traditional raster-based 3D and 2D tools in your pipeline, then export the final result to SVG using AIVE, Schipperheijn said.

AIVE Vector equations can be dropped directly into the Unreal Engine as native vector assets, enabling seamless integration with Nanite, Lumen, and other Unreal systems.

SVG format opens the door to advanced graphic editing tools (coming soon) while keeping the data lightweight and future-proof.

The film-quality, natural real-world look captured by AIVE can serve as a high-fidelity training base for other AI graphic systems.

Unique lighting and shading from a movie or game can be extracted as a consistent “lighting-shading theme,” ensuring mathematically accurate visual consistency across an entire title.

Powerful Integration Possibilities

AIVE can function as:

- A corrective mask layer applied alongside or inside Nvidia DLSS-5 to reduce artificial artifacts and restore more natural real-world output.

- A pre-production step to convert all game assets into precise vector format.

New Revenue & Value Opportunities in Gaming

Schipperheijn said AIVE opens exciting new monetization and production pathways in these ways:

Player-Created Assets: It allows gamers to upload real-world photos (selfies, pets, cars, etc.), vectorize them with AIVE, and turn them into high-fidelity in-game items, skins, or avatars that players can purchase or unlock.

Hollywood-to-Game Asset Conversion: Studios can license and vectorize iconic scenes, characters, or props from Hollywood films, then seamlessly integrate them as playable game assets — creating official movie-accurate DLC or expansions with perfect visual fidelity.

Premium Vector Asset Marketplace: Create a marketplace where developers and creators sell ready-to-use AIVE vector packs (environments, characters, lighting themes) that maintain cinematic quality while being lightweight and editable.

Enhanced Modding & User-Generated Content: Enable modders to vectorize their own creations or real-world references, resulting in higher-quality mods that feel like official content.

In simple terms: AIVE remains 100% true to the source. If the input looks real-world photographic, the vector stays real-world photographic. If the artist wants a cartoon or stylized look, that artistic intent is preserved perfectly.

“We are bringing this technology to the gaming world. Get ready to play the movie,” Schipperheijn said.

Bringing value to video games

Schipperheijn said these are further ways that AIVE can bring value to gaming:

- Realistic Player Avatars — Convert player photos into natural-looking vector representations for more lifelike in-game characters.

- Hollywood Film Integration — Transform cinematic movie footage into high-fidelity assets that give games a more authentic, film-like appearance.

- Enhanced DLSS Realism — Work alongside DLSS to correct artificial upscaling artifacts and restore natural textures and lighting for a more real-world look.

Origins

Schipperheijn said the vector tech has been in the works for 30 years. His experience in vector graphics goes back to the early days of Flash, which was a web-based Macromedia technology from decades ago.

Raster graphics is behind tech like special effects in movies. Raster is based on pixel-based images, made up of a fixed grid of pixels where each pixel has its own color. Scaling it up causes blurring or pixelation. It’s great for photorealistic images and complex color gradients.

Meanwhile, vector graphics is based on math-based shapes, built from paths, curves and formulas. Vector is resolution independent, so there’s no loss with scaling.
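To make the scaling difference concrete, here is a small illustrative sketch in Python. It is a generic textbook comparison, not anything from AIVE: `upscale_nearest` enlarges a pixel grid by repeating pixels, which is where the blocky look comes from, while `scale_path` enlarges a vector shape by multiplying its coordinates. Both function names are made up for this example.

```python
def upscale_nearest(image, factor):
    """Upscale a raster by an integer factor with nearest-neighbor sampling.

    Every source pixel becomes a factor-by-factor block of identical pixels,
    so no new detail is created and edges turn blocky.
    """
    return [
        [pixel for pixel in row for _ in range(factor)]
        for row in image
        for _ in range(factor)
    ]


def scale_path(points, factor):
    """Scale a vector path by multiplying each (x, y) point by the factor.

    The shape is still described by the same number of points, so it can be
    redrawn crisply at the new size.
    """
    return [(x * factor, y * factor) for x, y in points]


# A 2x2 checkerboard becomes a 4x4 grid of 2x2 blocks.
checker = [[0, 1], [1, 0]]
bigger = upscale_nearest(checker, 2)

# A triangle stays a three-point triangle at any size.
triangle = [(0, 0), (1, 0), (1, 1)]
bigger_triangle = scale_path(triangle, 3)
```

The raster version grows from four values to 16 without gaining any information, while the triangle is still three points after scaling.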

Raster graphics are used in movie special effects and game graphics textures, sprites and lighting maps. Vector graphics are used in movie titles or motion graphics and game user interfaces, fonts and 2D art, but they’re not often used for realistic art.

Flash was a tool that powered most of the internet’s early animations and browser games. It was a software platform and browser plugin that let creators build 2D animations, browser games, interactive web sites and video players using vector graphics and time-based animation.

Schipperheijn said he recalled a time when he worked from 2004 to 2006 and met with Jerry Yang, CEO of Yahoo, and presented a version of a kind of YouTube video service that leveraged a hybrid of the narrowband and broadband web.

“I built the vector system that would actually transmit 1080p, 30 frames a second, and still be under five kilobytes in broadcast streaming depth,” Schipperheijn said. “I built an entire interface for a 300 channel network that was a self-publishing network that would have competed against YouTube at that time. But Jerry Yang wasn’t overly thrilled with the direction we were going.”

Yahoo was distracted by biometric card tech that Schipperheijn developed to protect his secrets and they wanted to introduce it to Elon Musk, who had cofounded PayPal, but Schipperheijn wanted to focus on the vector engine instead.

“I built a narrowband/broadband self-Broadcast portal out of Flash and tried to sell the system to Yang at Yahoo. [It was] called ‘Consumer Broadband Network.’ A sample can be seen halfway down the page at this link,” Schipperheijn said. “The original flash network I built was based on Flash vector which cannot reproduce life like visuals as can be seen in the demo images displayed halfway down my founder’s website linked above. No company in the world including Adobe has been able to produce the type of math needed to reproduce 100% lossless Vector Translation.”

After that, Schipperheijn went back to applying his vector knowledge to biometrics. AI Vector Vision will later release the first vector biometric as part of its secure automation system. It will require biometric authentication to program automation systems, both software and hardware.

“We’re designing something that would be advantageous to all the hyperscalers, as well as the game industry. So it’s actually a dual potential vertical one for graphic and graphic analysis. The other thing is, it’s the world’s first 100% fidelity structured data outcome,” Schipperheijn said.

How it works

The tech is a visual translator that translates any input into traditional computer language, and AI Vector Vision does it at a level of fidelity that is a 100% match to the original input, Schipperheijn said.

“Now it’s been considered for many years to be impossible. You can actually type this current prompt into any AI computer and ask whether it’s possible to take unstructured data and convert it into 100% fidelity vector, and it will tell you that’s impossible,” he said. “That’s why I’ve been working on this for 30 plus years, and we’ve talked to a number of industry leaders that have tried to build vector translators as well. One of the biggest was Hewlett-Packard tried for about 10 years.”

They didn’t pull it off, nor did Adobe. Schipperheijn said he has done it, without using generative AI.

“We’ve figured out a way to solve the mathematics behind it. We are now delivering the world’s first translation of input data, visual input data, into 100% structured data,” Schipperheijn said.

At 100% magnification, Schipperheijn said, the thing to notice is how closely each output lines up with the source, pixel for pixel, in both color and position. Compare the source image with the PNG test exports from both AIVE and Adobe, he said, and see which one matches every pixel of the original.

He said AIVE is the only system capable of a 100% match.

“We have a special Python software that performs three tests on every image we convert to SVG. It also allows us to test Adobe and any other competitor as well,” he said.

At 300% magnification, Schipperheijn said you can now see how inaccurate all the competitors’ SVG conversions are. They draw detail that does not match and, in some cases, miss detail completely in the conversion to SVG.

He said you can see it in the test results of converting normal 12-point Text/Print.

“Conclusion, not even 1 letter converted to SVG correctly or legibly on the whole page. Summary detail is either not converted to SVG math accurately or is missing altogether. AIVE on the other [hand] produces a perfect image match at any scale whether you are dealing with low-res images or text and matches that same perfect result with images up to 8k high-res,” he said.

And he added, “AIVE is the only system that would enable life like visuals game play from Hollywood graphic assets. These new converted SVG assets can be imported into Unreal Engine enabling game play of your favorite movie. A whole new revenue stream for movie licensing to the game sector. Or AIVE can be [an] added/replacement layer in Nvidia DLSS 5 system to create the real-world look that the technology was designed to output.”

“Right now, about 90% of all data sitting inside of these hyperscalers, like the large language models, is unstructured. So it’s a real disadvantage. The computer doesn’t understand what it’s looking at. It doesn’t understand how to find it,” Schipperheijn said. “You have to use addressing system, mapping systems to all the unstructured data. That’s what the majority of the volume of a large language model is.”

That’s the bottleneck of the future of retrieval and understanding for a computer, he said.

In games, the vector tech could replicate real world imagery. It can be used to capture high-dynamic range, or high contrast imagery that makes shadow and lighting effects better.

“I can reproduce any quality, and our latest tests have been on HDR 10 plus. So the highest high definition, 4K resolution imagery can be 100% converted into mathematical state and retain 100% of the features that are characteristic of HDR plus itself,” Schipperheijn said. “It’s like a contrast map. Nvidia was trying to build with their DLSS 5. They’re trying to build something to sit on top of the original vector. The gaming industry uses vectors, but it doesn’t look like the real world. That’s been the problem: to transition from the game appearance into the real world appearance, and that’s obviously what we’ve achieved here.”

Schipperheijn added, “We do not use AI to accomplish this. That’s completely the difference between us and Nvidia. Nvidia is an afterthought that is applied afterward. Once the game has been rendered, we would go in and re-render the game before applying it to this particular category.”

How Schipperheijn’s tech got there

Key Insight (Set 1 – DLSS 5 Off): AIVE 2.0 maintains excellent fidelity to the original game render, preserving realistic skin tones and subtle details even at high magnification. Adobe Illustrator introduces noticeable blockiness and loss of realism.

Schipperheijn said his team didn’t set out to do this in the first place as an “over mapper,” to take old content and regurgitate the old content into something that still looks a little closer to reality. By contrast, those who have looked at Nvidia’s DLSS 5 up close — even among game developers — have noticed it looks artificial, kind of the way that many generative AI images look fake.

Nvidia’s researchers who showed me the tech at the recent GTC 2026 event said they didn’t want to change the “artist’s intent,” meaning they used the same underlying polygons, or 3D mesh, that gives structure to the 3D imagery overlaid on it. But in changing the textures on the mesh, people still saw big differences, like the insertion of wrinkles on faces that weren’t there in the original art — making someone look older.

“We would try and focus on bridging Hollywood with the gaming sector by being able to render Hollywood’s leading titles in a mathematical state so that they could be dropped into effect within, say, the Unreal Engine, for example,” Schipperheijn said.

He added, “If we’re talking about engines for the video game industry, we would build a bridge translator, and you would be able to produce, literally, the [James Bond] Moonraker movie could be literally translated into a game. And literally, you would then be playing Roger Moore as your character, and it would look a lot more like Roger Moore than anything else ever drawn in a video game to this point.”

He said, “That would be the direction we would probably play in, because it’s a lot easier for us as the content is already rendered. All we’re doing is the translation into mathematics, and then at that point, it can be modified. It can be integrated into the command structure for video game play.”

The company formally incorporated in June 2025 in Brookville, New York, and it has filed for three different patents. AI Vector Vision has raised $250,000. That’s not much, but Schipperheijn is looking for good momentum to come from his disclosures.

It’s not procedural tech

This tech is different from the math in procedural generation of landscapes like in games like No Man’s Sky, Schipperheijn said.

“I’ll be honest, it’s a difference in the math and kinematics itself,” he said. “Our math is able to retain the original intent of the raster input itself, literally flawlessly. As a matter of fact, our converter goes through a three-pass verification process. So what we do is we translate the input, whether it’s film or whatever it is. We translate that input into mathematics, and then we actually take that mathematics and we re-export it back into raster so you can do an actual apples-to-apples comparison to determine how accurately you’re retaining the mathematics from the raster’s perspective.”
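The round trip he describes (raster in, math out, math back to raster, then compare) can be illustrated with a deliberately naive stand-in. The Python sketch below is not AIVE's method, which the company has not disclosed. It simply turns each horizontal run of same-colored pixels into a one-pixel-tall rectangle, which is trivially lossless but produces none of the smooth curves or compact output that a real converter would aim for. All names here are hypothetical.

```python
def vectorize_runs(image):
    """Encode each horizontal run of identical pixels as (x, y, width, color).

    A naive stand-in vectorizer: exact by construction, not AIVE's math.
    """
    rects = []
    for y, row in enumerate(image):
        x = 0
        while x < len(row):
            start = x
            while x < len(row) and row[x] == row[start]:
                x += 1
            rects.append((start, y, x - start, row[start]))
    return rects


def rasterize(rects, width, height):
    """Paint the run rectangles back onto a pixel grid."""
    image = [[None] * width for _ in range(height)]
    for x, y, run, color in rects:
        for i in range(x, x + run):
            image[y][i] = color
    return image


def to_svg(rects, width, height):
    """Serialize the rectangles as a minimal SVG document."""
    body = "".join(
        f'<rect x="{x}" y="{y}" width="{w}" height="1" fill="rgb({r},{g},{b})"/>'
        for x, y, w, (r, g, b) in rects
    )
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'width="{width}" height="{height}">{body}</svg>'
    )


def round_trip_matches(image):
    """Vectorize, re-export to raster, and compare against the original."""
    height = len(image)
    width = len(image[0]) if image else 0
    return rasterize(vectorize_runs(image), width, height) == image
```

The point of the sketch is the verification step rather than the encoding: any converter, whatever its math, can be judged the same way by re-rasterizing its output and checking it against the source.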

He added, “The other interesting thing is, within the chain of creation, we can bounce back and forth between raster and vector at any given time. Right now, there’s not a lot of vector special effects design because this technology is just coming to market.”

Schipperheijn said the company is trying to hold talks with bigger corporations like the hyperscalers in the space first. The company can focus on games or video players as well. The company’s file format will be backward compatible with the industry, and the company is building a database structure for the first time that will alleviate 90% of the unstructured data.

“The mathematics appear structurally to replicate a raster image. So literally, the computer is seeing exactly what a human is seeing, thus bridging the awareness level between a computer and a machine to be similar in terms of vision. And that’s a major step forward,” he said.

Getting to the market

As for the company, Schipperheijn said he didn’t form a company for years. He worked as a physicist and developed a number of other technologies along the way. He worked on early hologram tech. His team has about six people.

“Once a hyperscaler realizes that vector has been cracked, there’ll be an awful lot more attention on us,” Schipperheijn said. “All the big players have all tried to build vector databases now. They’re building vector databases right now. They call them a vector database. Their terminology is antiquated. We’re also building a vector database, but the difference is we’re not using vector to map the location to unstructured data. We’re using vector as the actual translation infrastructure data. We don’t put unstructured data in our database. We’re going to have completely different AI.”

Schipperheijn added, “We start off with a converter, and we have a file format, then we have the database structure, and the final conclusion is building an AI. And that’s what we’re doing with Lucid. We’re going to be training Lucid as the world’s first complete visual AI, and our AI will be able to draw natively in vector.”

The final form of the tech will be an AI, but at the moment it is a file format that could be adapted for a game engine like Unreal.

“The outcome is completed engineering and everything is done. You know, we’ve already developed this so we can fit into a stack in multiple different formulations. So we’re ready for the next move. We are considering a money raise just to help us build the awareness level,” Schipperheijn said. “I’m counting on the interest spiking here.”