There’s been a rise in AI-driven scams that target gaming brands on social media, according to social media security company Spikerz Security.

Spikerz tracked more than 19 million attempts to defraud gamers in 2025 as well as a spike in impersonation and AI-driven scams. As a result, the gaming industry is facing unprecedented challenges.

Spikerz said that one in five users of major gaming and betting platforms have encountered scams, and 10% of them have actually fallen victim to at least one. It also said that for gaming brands, every 100,000 social media followers translates to an estimated $87,000 lost to impersonators stealing players.

New findings by social media security company Spikerz have uncovered how gaming brands became prime targets for fraud in 2025, and they highlight the three most damaging scams of the year.

For years, gaming studios have treated social media as their primary channel for growth, community building, and creator collaboration. But in recent years, those same platforms have become one of the biggest operational risks. With Grand Theft Auto, Minecraft, and Call of Duty among the most exploited games, it’s clear that cybercriminals are actively following gaming trends to reach their targets, Spikerz said.

The scale of scams across social and digital platforms takes us back to that staggering stat: one in five users of major gaming and betting platforms have encountered scams, and 10% of them have actually fallen victim to the scams, Uri Schechterman, chief revenue officer at Spikerz, said in an interview with GamesBeat.

With over 3.3 billion gamers around the world, that’s tens of millions of people being scammed out of their money, with the blame usually falling on the gaming brands for failing to keep players safe.

Furthermore, there were over 19 million attempts to download malicious or unwanted files disguised as popular games in the past year, with social media as one of the most common attack vectors used to target gamers.

Gaming brands with large followings face significant financial exposure: every 100,000 followers translates to an estimated $87,000 per year lost to impersonators stealing players. (That is based on a 5% engagement rate and just 1% of engaged users being stolen by an impersonator posing as a brand and deceiving players. Spikerz calculated this using a $25 customer acquisition cost and a $120 lifetime value.)
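
The article does not spell out how those inputs combine. One reading that happens to reproduce the $87,000 figure, offered here as an assumption rather than as Spikerz's stated method, treats the stolen players as a monthly loss and counts both the acquisition cost and the lifetime value for each one. The function name and the monthly cadence below are hypothetical.

```python
def estimate_annual_loss(followers, engagement_pct=5, stolen_pct=1,
                         cac=25, ltv=120, periods_per_year=12):
    """Rough annual loss in USD from impersonators luring players away.

    ASSUMPTION: the loss recurs every month and each lost player costs
    CAC + LTV. Neither detail is confirmed by the source.
    """
    engaged = followers * engagement_pct // 100      # followers who engage
    stolen = engaged * stolen_pct // 100             # engaged users lured away
    loss_per_period = stolen * (cac + ltv)           # cost of one period's losses
    return loss_per_period * periods_per_year


print(estimate_annual_loss(100_000))
```

Under these assumptions, 100,000 followers yield 5,000 engaged users, 50 of whom are lost per month at $145 each.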

But the cost isn’t just financial. When a brand account is hijacked, player trust drops instantly, new users churn during critical GTM moments, algorithms suppress compromised content, support tickets spike, creator relationships strain, and launch momentum collapses.

“Social media used to be where gaming communities came together,” said Naveh Ben Dror, CEO and cofounder of Spikerz Security, in a statement. “Now it’s where they get scammed and feel unsafe. The threats have outgrown the platform’s security capabilities, which means there needs to be a complete rethink of how brands protect their players.”

Enter Spikerz

Spikerz got started in 2022 and it provides comprehensive protection for businesses whose digital channels generate value and are mission-critical. It offers brand protection, impersonation management and comment protection.

The firm lets companies keep growing on social media while staying protected. It offers a dashboard that summarizes asset risks in one place, and it monitors those assets for policy violations that could signal hacking attempts or intrusions.

Spikerz has about 18 employees at its headquarters in Tel Aviv, Israel, and it has raised $7 million to date, Ben Dror said in an interview with GamesBeat.

Paul Eibeler, former CEO of Take-Two Interactive and veteran gaming industry executive, said in a statement, “For years, gaming studios viewed social media as a driver of growth, community, and creator collaboration. But the same platforms that powered this momentum have become one of the industry’s most significant risks.”

He added, “The threat has existed for a long time, yet today it’s more real and more immediate than ever. As game makers, we have a responsibility to ensure that our gamers aren’t exposed to scams or account hacks carried out through our brand accounts. Protecting them is essential to preserving the trust that defines this industry.”

“Think of us as an alarm company. But we monitor the windows and doors and the floor and the inside of the house, even after somebody got the permission to go inside — we know that somebody can go rogue, even after they get the permission to come inside the house,” Schechterman said.

Schechterman said he believes Spikerz fits nicely into closing the gap and the blind spot in security for social media.

“The company was founded based on a need. [Ben Dror] was a marketing manager for a brand that had several issues with social media and people hacking or attempting to hack their comment sections. And that’s something we see often with gaming companies being exceptionally toxic. They impersonate and hijack customers and players to other sites.”

Ben Dror wanted to find a solution and he partnered with a couple of cyber experts and started Spikerz.

Why game companies are getting hacked

A lot of people think they’re secure when they use two-factor authentication, such as a text message sent to a smartphone to verify a password change on a website. But too often it is easy to duplicate a person’s SIM card and then intercept the verification message.

“It’s not hard to do that these days,” he said. “We scan every attempt and we block it on the spot.”

The company uses AI models to detect anomalies where people are trying to log in or do something disruptive on comment boards, like leaving recipes in Spanish on a tech site. The system will flag special cases for humans to decide whether to spike or allow a comment. The content can be taken down almost immediately. This can be done with a small company or one with more than 100 social media managers, Schechterman said.

Game companies stand out as targets because of their high numbers of players and high engagement. They need their channels screened for toxicity, and people like to impersonate them. The company makes money through enterprise subscriptions, with annual plans starting at $15,000. It is protecting more than 5,000 brands, Schechterman said.

“The whole idea is basically here is to do three things. One, stop hacking. Stop brand impersonating and protect against any account takeover in social media. These are things that you see happening recently with a lot of crypto scams. It happened to Mr. Beast and happened to so many different celebrities and companies,” Schechterman said.

Schechterman said the company has an “AI scanner” that can see all of the manipulations that bad actors are trying.

“We can flag it to those companies way before those [bad actors] catch any momentum, and we can take them down,” Schechterman said.

And the company controls the comment section so it doesn’t become a cesspool of hate and scams.

For the comment section, Schechterman said, “We can create those AI tools that, within milliseconds, will take down those comments.”

Riot Games’ League of Legends had a multi-layered breach

In March 2025, the global League of Legends community woke up to a surreal announcement: an “official” Riot-backed cryptocurrency, promoted simultaneously on the game’s Instagram account and on X via a lead designer’s compromised profile. What made the incident pivotal wasn’t just scale, it was the dual compromise of both brand and employee accounts.

“This type of blended attack is the new norm,” said Ben Dror. “Hackers exploit player trust. A message from a game designer feels credible, and when brand and creator accounts reinforce each other, it looks official.”

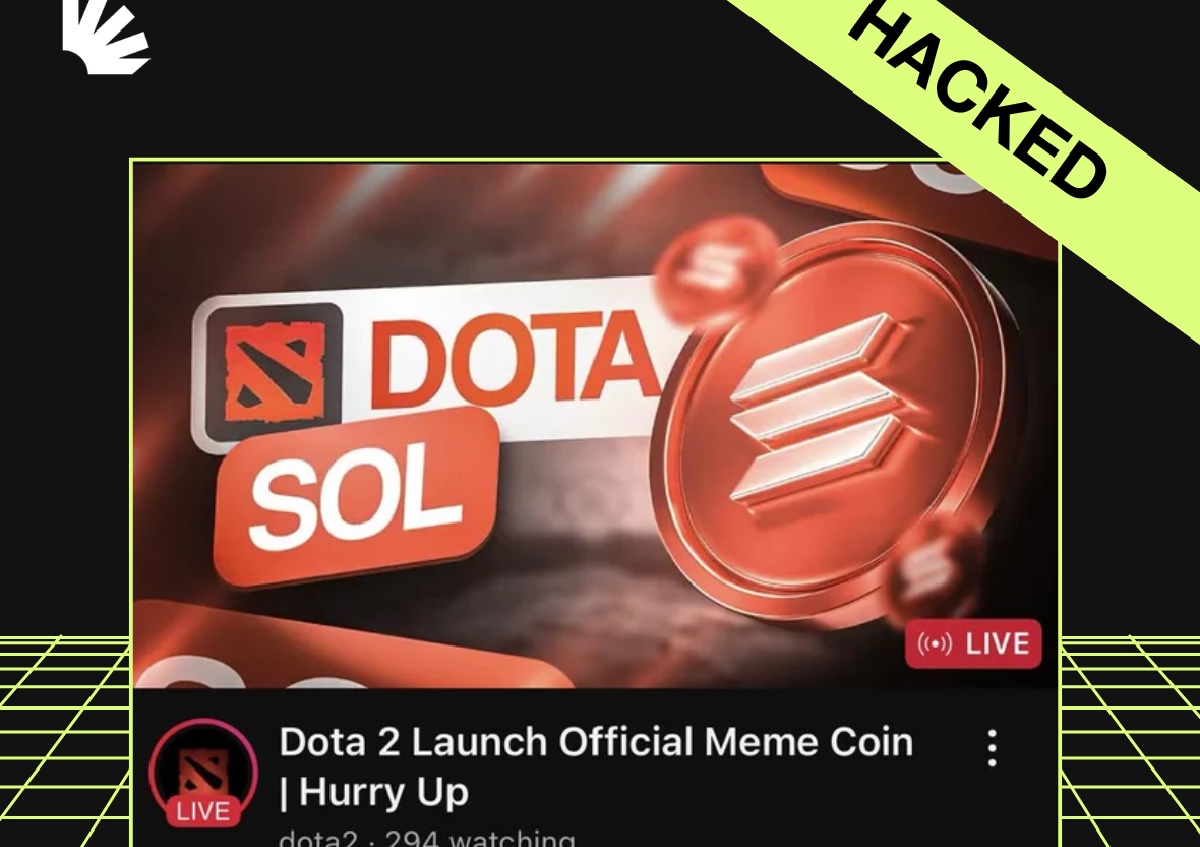

Esports World Cup, ESL, Blast Premier & Dota 2: A coordinated breach like the gaming world has never seen

The October 2025 attack on major esports YouTube channels demonstrated how quickly a unified exploit can spread. Attackers commandeered multiple channels and streamed a pre-recorded Gabe Newell talk overlaid with a QR code tied to a phishing site [5]. Streams reached more than 60,000 viewers within minutes, and thousands of fans were exposed to fraudulent meme-coins before the channel owners regained control. The incident sent a clear message that the ecosystem around a game, including esports, creators, and partners, is just as vulnerable as the studio itself.

Schechterman noted that some big game companies have recently gotten hacked. It happens because the security protocols of those companies are likely to be similar to what they were five or ten years ago.

“Not much has changed,” Schechterman said. “Their systems were built for individuals. They weren’t built for teams. And they weren’t built for social media. Security will come at the expense of engagement because engagement is far more lucrative. So it’s like the perfect storm. On the one hand, it’s easy to break in.”

Once the bad actors break in, they announce that the big company is launching a cryptocurrency, and then a swarm of users comes in to try to get in on the investment opportunity. And then they’re duped. The bad actors can make millions in minutes.

“It’s happening to everybody. We’re talking to a lot of those companies,” Schechterman said. “Long story short, we’re here to close the gap. We make sure they never get hacked. And we ensure their social media channels are very strong, and we want them to own the narrative for their voice and their brand, not the hackers.”

Stellar Blade: Extended takeover & content manipulation

The Stellar Blade hack in July 2025 evolved beyond a one-time compromise [6].

Attackers:

● Promoted fake “Stellar Blade Coin” drops

● Launched external phishing websites

● Disabled comment sections to silence warnings

● Posted NSFW art to drive engagement and lure clicks

This was the first major case where attackers blended brand-appropriate content style (fan art) with malicious objectives, setting an alarming precedent.

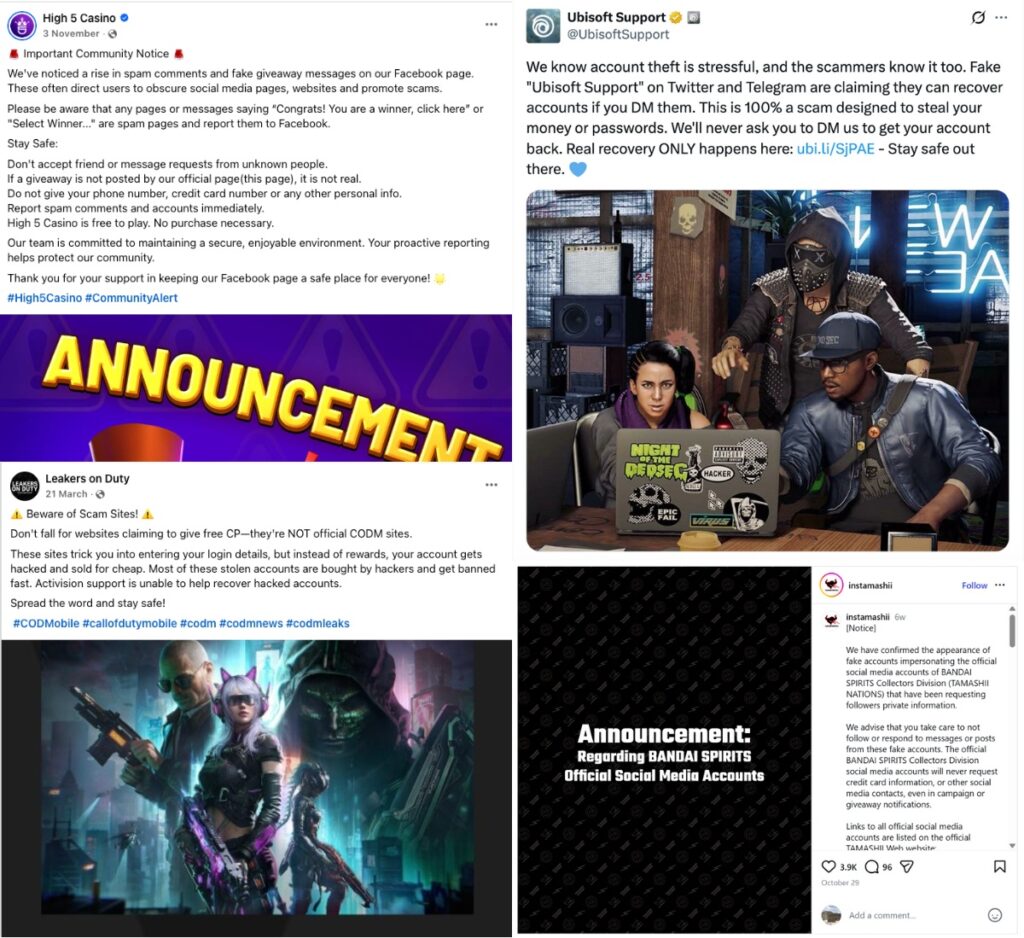

Comment sections are the new trap

Brands suffer from major waves of bots and fake accounts scamming their players. Many household names in the industry are regularly under attack, with bots deceiving their players and sending them to links, recruiting them into Telegram groups, and offering “free tokens” for games.

Announcements have been published by many of the world’s largest gaming brands, including Ubisoft, Leakers on Duty (source for Call of Duty leaks), and Tamashii Nations, alerting players about a wave of scam attempts that use the brands’ identities and are designed to steal money and personal data from users.

“We’ve seen so many gaming companies sharing posts on their social accounts, trying to warn their audience about scammers and spammers exploiting their comments. The issue is pervasive and impacts games across all genres,” explained Ben Dror.

The 5 threats gaming CMOs can’t ignore going into 2026

The industry’s exposure is now too large, too public, and too fast-moving to rely on traditional social media habits. Protection has to match the speed and sophistication of the threats targeting both studios and players.

Community hijacking & traffic diversion

Scam links, phishing attempts, bot replies, and toxic language now follow predictable patterns in comment sections, especially in the first minutes after a post goes live. This is when visibility is highest and defenses are weakest, making comments a prime surface for traffic diversion and trust erosion rather than engagement.

How to address it: Don’t rely on people manually reviewing every comment. Create a shared list of blocked keywords that every brand account uses, and turn on each platform’s built-in filters and auto-moderation by default. Decide which comments should be removed immediately, and which can wait for review. This way, teams aren’t making judgment calls in the moment, and comment sections stay under control, even during spikes.
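
As a rough illustration of that split between immediate removal and deferred review, here is a minimal sketch. The keyword lists, the `triage` function, and the rule that any bare link goes to review are all hypothetical; a real policy would come from the brand's own blocklist and each platform's moderation tools.

```python
import re

# Hypothetical shared blocklist, reused by every brand account.
REMOVE_NOW = ["free tokens", "t.me/", "claim your airdrop"]
HOLD_FOR_REVIEW = ["giveaway", "dm me", "support team"]

LINK_PATTERN = re.compile(r"https?://")


def triage(comment: str) -> str:
    """Return 'remove', 'review', or 'keep' for a single comment."""
    text = comment.lower()
    # Terms the team agreed in advance never need a human decision.
    if any(term in text for term in REMOVE_NOW):
        return "remove"
    # Ambiguous terms and unknown links wait in a review queue.
    if any(term in text for term in HOLD_FOR_REVIEW) or LINK_PATTERN.search(text):
        return "review"
    return "keep"


for c in ["Get FREE TOKENS here", "Is the giveaway real?", "Great trailer!"]:
    print(c, "->", triage(c))
```

Because the decision is encoded ahead of time, nobody has to make a judgment call while a post is spiking.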

Operational drag on community and marketing teams

The real cost of social abuse is not the volume of incidents, but the repetition of handling the same problems across platforms, brands, and regions. Without shared rules and workflows, every scam, toxic comment, or impersonation case becomes manual, slow, and inconsistently handled.

How to address it: Social teams shouldn’t be reinventing decisions every time something goes wrong. Define one clear operating policy that spells out who removes scams and toxic content, who escalates serious issues, and who updates internal stakeholders. When everyone follows the same rules, teams move faster and avoid repeated debates about similar cases.

Social media security: brand-level visibility & organization

Most social media security failures stem from fragmentation rather than external attacks. Large gaming companies often operate multiple pages, regions, studios, and agencies under the same brand, with access and responsibility spread thinly and unclearly.

How to address it: Not all social platforms work the same way. For platforms with shared logins (like Instagram, TikTok, and X), the password, email address, phone number, and two-factor authentication should all belong to the business, and be stored securely in one central place. Team members should get access without ever seeing or changing the core login details.

For platforms with role-based access (like Facebook, LinkedIn, and YouTube), define roles clearly, give people only the access they need, and review access regularly to remove outdated or unnecessary permissions.

Social media security: access rules & takeover readiness

Access audits alone do not prevent incidents if there is no plan for what happens next. Many brands know who has access, but lack rules for how access is granted, shared, or revoked, and have no defined response when access is compromised.

How to address it: Monitor access continuously, and remove access immediately when someone changes roles or a vendor relationship ends. Rotate shared-account passwords on a set schedule, such as every 60 days.

At the same time, create a simple incident response plan. It should clearly state how a takeover is detected, who can lock the account and reset credentials, how the wider team is notified, and which ads or campaigns are paused until the account is secure again.
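
The rotation schedule mentioned above is easy to check automatically. The sketch below assumes a team keeps a record of when each shared account's password was last changed; the `overdue_accounts` helper and the account names are hypothetical, and the 60-day interval is simply the example given in the text.

```python
from datetime import date, timedelta

ROTATION_DAYS = 60  # example interval from the text, adjust to policy


def overdue_accounts(last_rotated: dict[str, date], today: date) -> list[str]:
    """Return the accounts whose password is older than the rotation interval."""
    limit = timedelta(days=ROTATION_DAYS)
    return sorted(
        name for name, rotated in last_rotated.items()
        if today - rotated > limit
    )


# Hypothetical record of last password changes per shared account.
records = {"instagram": date(2025, 1, 1), "x": date(2025, 3, 1)}
print(overdue_accounts(records, today=date(2025, 3, 15)))
```

Running a check like this on a schedule turns the policy into a reminder instead of something a team has to remember.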

Brand impersonation & player scams

Impersonation is no longer random or rare. Fake accounts, clone pages, and scam campaigns repeatedly reuse the same names, visuals, and messaging, yet many brands still treat each case as a one-off incident.

How to address it: Regularly search social platforms for your brand name, product names, game titles, and common support-related terms. Track every impersonator in a simple system, even if it’s a shared spreadsheet, showing status and next steps.
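
Part of that search can be scripted once a team has a list of account handles to check. The following is a minimal sketch using Python's standard `difflib` module. The brand handle `examplegame`, the look-alike character table, and the 0.8 threshold are illustrative assumptions, not a description of how Spikerz or any platform detects impersonators.

```python
from difflib import SequenceMatcher

# Illustrative look-alike substitutions and separators, not exhaustive.
LOOKALIKES = str.maketrans(
    {"0": "o", "1": "l", "3": "e", "5": "s", "_": "", ".": "", "-": ""}
)


def normalize(handle: str) -> str:
    """Lowercase a handle, drop a leading @, and undo common substitutions."""
    return handle.lower().lstrip("@").translate(LOOKALIKES)


def suspicious_handles(official: str, candidates: list[str],
                       threshold: float = 0.8) -> list[str]:
    """Return handles that resemble the official one without matching it."""
    target = normalize(official)
    flagged = []
    for handle in candidates:
        if handle.lower().lstrip("@") == official.lower().lstrip("@"):
            continue  # this is the real account
        cleaned = normalize(handle)
        similar = SequenceMatcher(None, target, cleaned).ratio() >= threshold
        if target in cleaned or similar:
            flagged.append(handle)
    return flagged


found = suspicious_handles(
    "examplegame",
    ["examplegame", "ExampleGame_Support", "examp1egame", "cookingdaily"],
)
print(found)
```

Each flagged handle would then go into the tracking sheet with a status and next step, so repeat offenders are recognized instead of being handled as new cases.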

Dealing with comments

I asked if there was a way to handle 600 comments on a social media post. Schechterman said, “The crazy part is the answer is yes. What we do is give the LLM examples. These are legit comments that you should not delete. And we can actually put those examples into the system and it learns it. Some of those giveaways on the toxic comments can be extra anti-semitism or extra racism. There are a lot, and you know, you f in this and F in that, and this company is better.”

Some of the comments that should be deleted are hateful, and some are repetitive. Lots are simply irrelevant to the discussion at hand.

“We can easily identify that and take it down,” he said. “I would say 95% of what we remove is undisputed, like no controversy whatsoever. But the 5% is down to perhaps 10 or 20 comments on a post. Then you can decide on your own how you want to flag it for leaving it on or deleting it manually.”

Ben Dror noted that Dota 2 got hacked recently and, at the same time, one of the esports leagues for the game got hacked as well.

“They hacked their organization and started posting on their behalf of a crypto scam. The reputation [problem] there is extreme. Now they may want to talk about security, but they also want to promote something,” Ben Dror said. “As a gaming company, you basically want to create games and create engagement. You don’t want to handle security answers and brand reputation risks. We basically take this off your plate.”

Ben Dror added, “If this is the number one risk to your brand, and this is the number one place all your friends are going to, it’s a matter of not if but when because you just don’t have the protection tools in place to do this yourself as a company.”

“There’s no human way possible for them to deal with the impersonations and the volume that they’re expecting,” Schechterman said. “A good example is someone pretends to be a support person and they’re helping with a technical problem. It’s hard to get hold of a human for such conversations, and so players may welcome a call from ‘tech support.’ They will offer credits and then ask for someone’s credit card information so they can send the credits.”