LTX said it is launching a new AI model that can generate high-quality videos without incurring huge AI processing costs.

LTX said the promise of AI-generated video has come with an asterisk. You could produce stunning content in minutes, but only if you were willing to pay per generation, send your intellectual property and workflows to a third-party cloud, and accept that your creative process lived somewhere else.

For individual creators, that was a friction point. For enterprises protecting proprietary IP and trying to manage costs, it was a dealbreaker. When every generation is billed and has to run in a cloud data center, heavy AI usage becomes too costly. That constraint is now gone, LTX said. With the new model, users can generate videos locally without paying per generation.

Today, LTX is launching LTX-2.3, a 20.9-billion-parameter multimodal AI model capable of generating production-quality video at up to 4K, running entirely on consumer-grade GPUs, with no cloud connection required. The model weights are open and free to use; companies generating over $10 million in annual revenue require a commercial license.

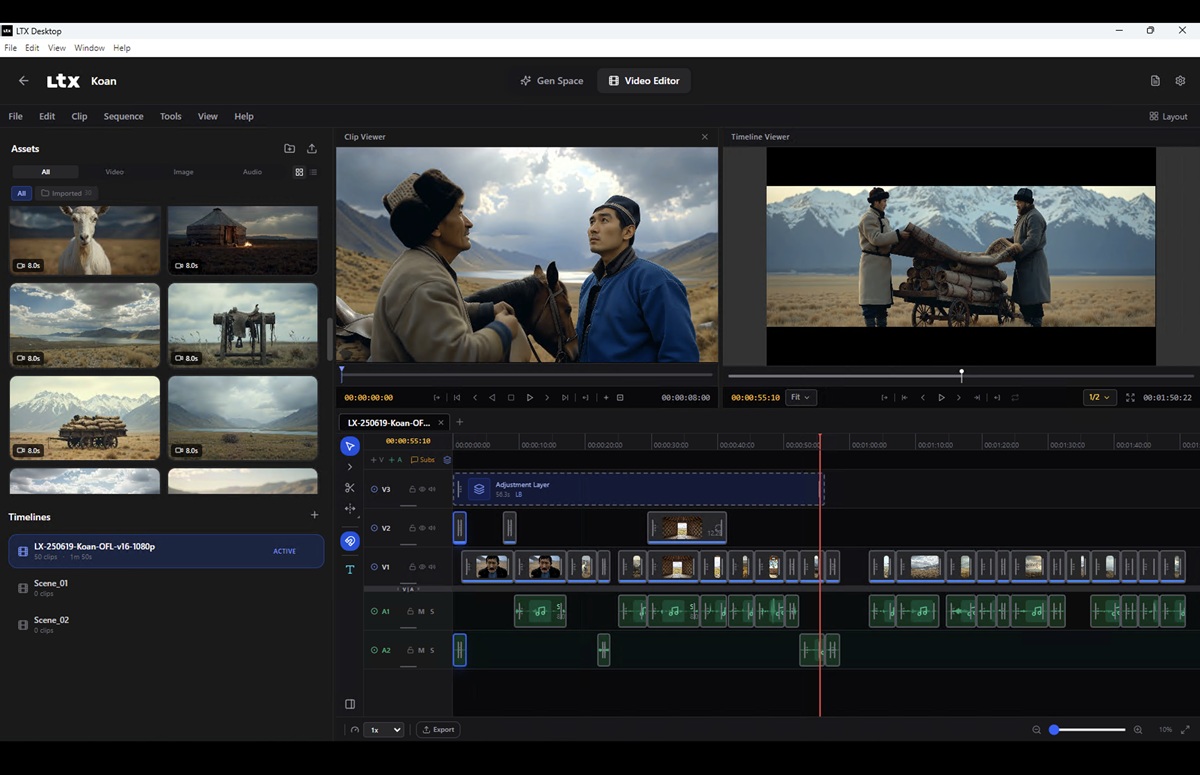

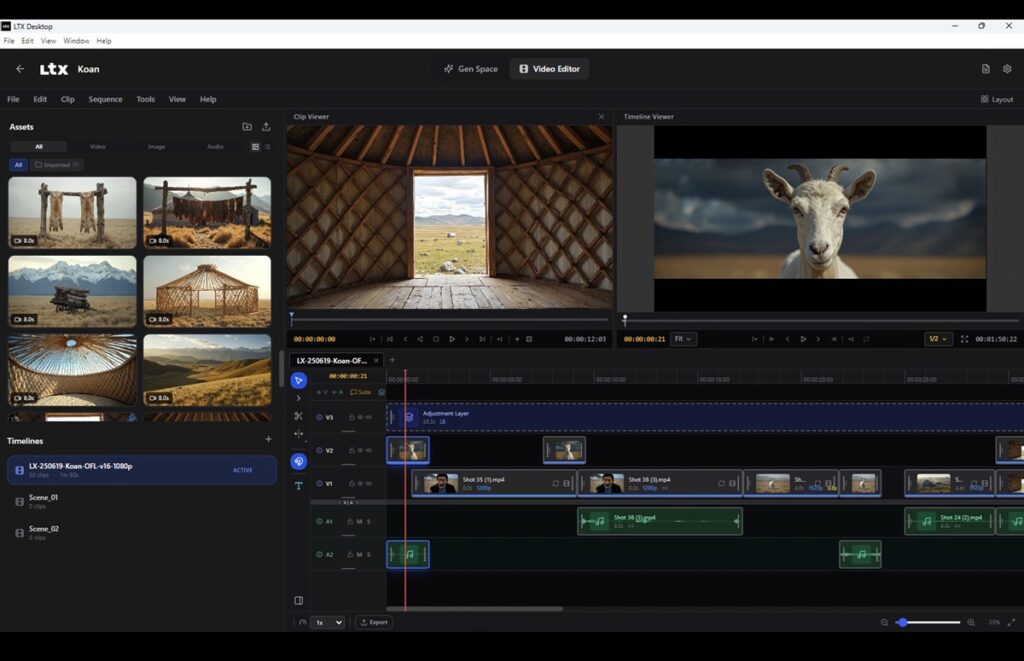

Alongside it, the company is releasing LTX Desktop, a professional video generation and editing application built entirely on the LTX engine, free and open source under the Apache license.

The implications for enterprise users, the broader creative economy, and investors are significant, the company said.

Local inference changes the math entirely

The cloud-based model for AI video generation made economic sense when the compute requirements were too demanding to run anywhere else. That era is over. LTX-2.3 runs locally on hardware that studios and serious creators already own, with no API calls, no per-generation fees, and no data leaving your machine. And it does that at production-level quality.

This is a fundamental shift in the cost model. For a production studio iterating on dozens of visual concepts in a single session, the difference between zero marginal cost and per-generation pricing is the difference between creative freedom and significant budget constraints.
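To make that cost comparison concrete, here is a minimal sketch of the arithmetic in Python. Every figure and function name in it is a hypothetical placeholder, not LTX pricing or a published benchmark: it simply compares a per-generation cloud bill with a local setup that has a one-time hardware cost and an optional per-generation energy cost, and estimates how many generations it takes for local inference to come out ahead.

```python
import math
from typing import Optional


def cloud_cost(generations: int, price_per_generation: float) -> float:
    """Total spend when every generation is billed (hypothetical pricing)."""
    return generations * price_per_generation


def local_cost(
    generations: int,
    hardware_cost: float,
    energy_per_generation: float = 0.0,
) -> float:
    """Total spend for local inference: one-time hardware plus optional energy."""
    return hardware_cost + generations * energy_per_generation


def break_even_generations(
    price_per_generation: float,
    hardware_cost: float,
    energy_per_generation: float = 0.0,
) -> Optional[int]:
    """Smallest number of generations at which local cost <= cloud cost.

    Returns None when local inference never catches up, i.e. when the
    per-generation saving is zero or negative.
    """
    saving = price_per_generation - energy_per_generation
    if saving <= 0:
        return None
    return math.ceil(hardware_cost / saving)


if __name__ == "__main__":
    # Illustrative numbers only: $0.50 per cloud generation, a $2,000 GPU.
    n = break_even_generations(price_per_generation=0.50, hardware_cost=2000.0)
    print("break-even generations:", n)
    # A studio that already owns the hardware can set hardware_cost to 0.
    print("local cost, owned hardware:", local_cost(500, hardware_cost=0.0))
    print("cloud cost, same workload:", cloud_cost(500, 0.50))
```

For a studio that already owns suitable hardware, the hardware term drops to zero and only the energy cost remains, which is the zero-marginal-cost scenario the company describes.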

For enterprises deploying AI video workflows at scale, eliminating cloud dependency means eliminating a category of recurring infrastructure spend entirely, while also removing API rate limits and vendor lock-in. Because LTX-2.3 is an open-weight, local model, users do not have to send their IP to the cloud, and they can fine-tune it to meet their exact needs, which avoids the limitations of a black-box service.

LTX says LTX-2.3 also runs at one-fifth to one-tenth the compute cost of leading models, making it viable for high-volume production environments.

A meaningful step toward democratizing video production

High-quality video production has historically required either significant capital (for expensive software licenses, cloud compute budgets, numerous GPUs, and large technical teams) or access to a major studio’s infrastructure. Both paths were closed to independent creators, small studios, and companies in markets where dollar-denominated SaaS pricing creates a structural barrier.

LTX Desktop removes those barriers, giving powerful tools to many people who previously had no access to them. The previous model, LTX-2, was downloaded more than three million times from Hugging Face in its first month and is approaching five million downloads as of today.

This release extends that reach with a full desktop application designed for professional workflows. Individual creators, production companies of all sizes, marketing teams, educators, and developers in emerging markets are now a realistic user base.

Quality and capability to match the ambition

Democratization only matters if the output is worth producing. LTX-2.3 was built to clear that bar. The model features a new text connector for improved prompt adherence, a new variational autoencoder (VAE) that preserves fine visual detail, native portrait-mode support, and substantially improved image-to-video generation. Audio generation has been refined through cleaner training data, with native audio output included alongside video. The architecture is multimodal at its core: one model handling text, image, audio, and video as unified inputs and outputs, not separate tools bundled under one subscription.

An open platform built for builders

LTX-2.3 is being released as open weights, with model files publicly available on Hugging Face. A forthcoming CLI will enable developers to run LTX locally and build directly on top of it. LTX Desktop shares the same open model, with a public roadmap and continuous community-driven development.

For companies building creative tools, content pipelines, or AI-powered media workflows, the engine powering LTX Desktop is available for licensing and integration—a production-quality multimodal foundation without the R&D cost of building one from scratch.

LTX Desktop is available today. The model weights are on Hugging Face. The repository is open. The developer CLI is coming soon.

Established in 2024, LTX is an AI company developing production-ready creative infrastructure for enterprises, studios, and professional teams. Its tools integrate directly into production workflows, enabling high-fidelity, scalable creation while preserving control over process, workflows, and IP.

LTX has been fully funded to date by its parent company, Lightricks, whose investors include Insight Partners, Goldman Sachs, and Viola.